Concept 4: Real-time A/B Testing

For decades, restaurant operators have made menu decisions based largely on intuition, industry "best practices," and personal preference. Experience has value, but customer behavior doesn't always match expectations. A study published in the Cornell Hospitality Quarterly found that restaurant operators accurately predicted customer preferences only 59% of the time, little better than a coin flip (Susskind et al., 2019).

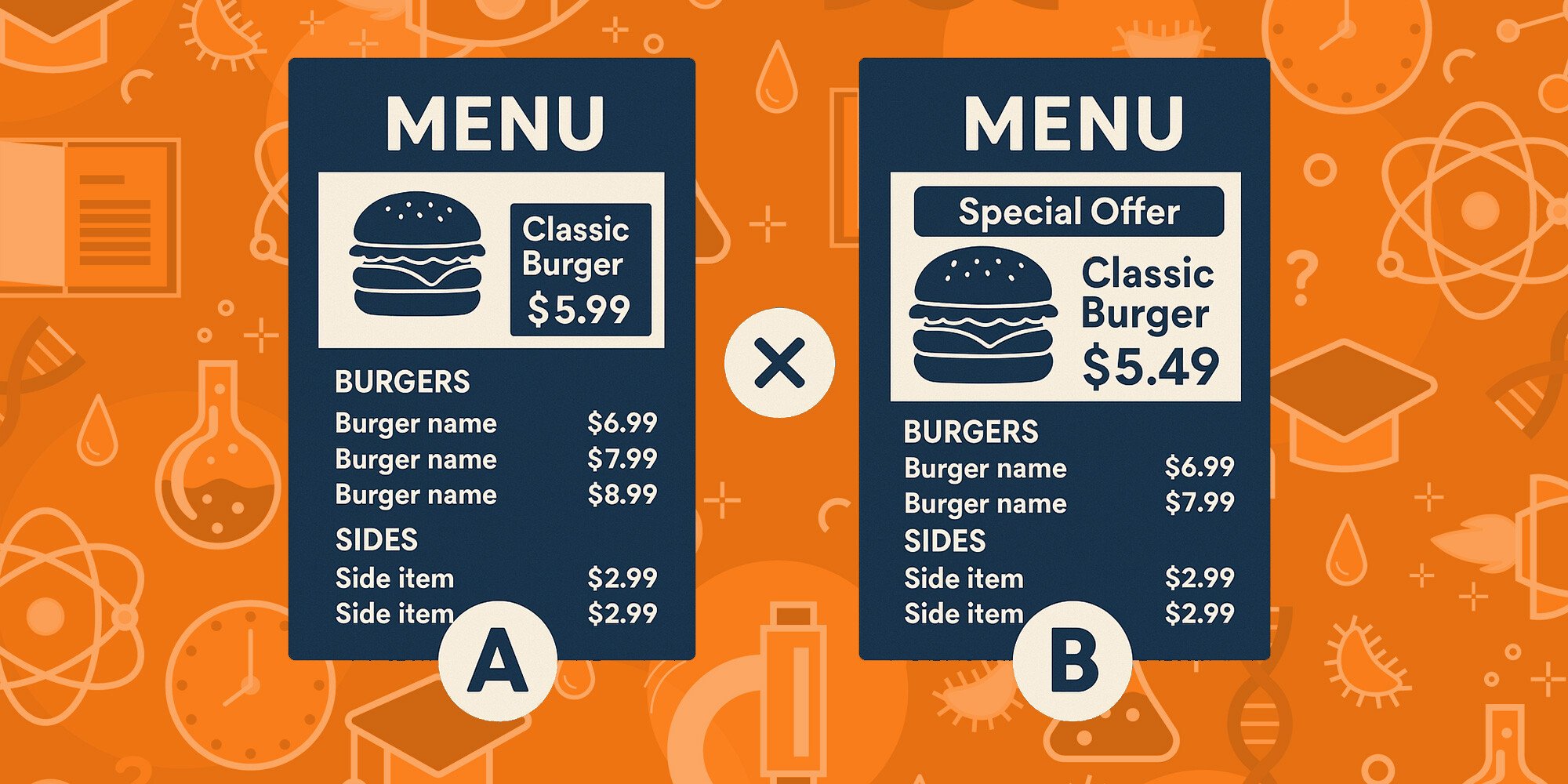

Digital menu boards fundamentally change this dynamic by enabling rapid testing of different approaches to determine what actually drives results. Rather than debating whether a particular menu layout or pricing strategy will work, you can test alternatives in real-world conditions and let customer behavior provide definitive answers.

Understanding A/B Testing Fundamentals

At its core, A/B testing (sometimes called split testing) compares two versions of a menu element to see which performs better against your defined objectives. The approach applies the controlled-experiment logic of the scientific method and has become standard practice in fields like e-commerce, where Amazon alone reportedly runs over 10,000 A/B tests annually (Kohavi & Thomke, Harvard Business Review, 2017).

For restaurant operators with digital menu boards, A/B testing offers a systematic way to:

- Test specific hypotheses about menu design, pricing, or messaging

- Measure the impact of changes on key performance metrics

- Make evidence-based decisions rather than relying on assumptions

- Continuously optimize your menu's performance

The Business Case for Menu A/B Testing

The financial impact of systematic menu testing can be substantial. According to research published in the International Journal of Hospitality Management (Taylor & DiPietro, 2018), restaurants implementing data-driven menu optimization through controlled experiments saw an average increase of 3-5% in revenue per available guest, with some tests yielding improvements of over 20% for specific menu categories.

This impact stems from several factors:

- Discovering hidden preferences that customers may not articulate but demonstrate through behavior

- Removing operational friction by identifying and resolving menu elements that cause confusion

- Measuring price elasticity to find the price points that maximize profit

- Increasing conversion rates for high-margin items and add-ons

Digital Menu Boards: The Ideal A/B Testing Platform

- Simultaneous testing across multiple locations

- Controlled variables with all other factors remaining constant

- Rapid iteration based on incoming results

Key Menu Elements to Test

Digital menu boards allow you to test virtually any element of your menu presentation.

Here are high-impact areas to prioritize:

1. Visual Layout and Organization

- Category ordering and grouping

- Grid vs. list presentation

- Product image size and placement

- White space utilization

2. Item Descriptions and Naming

- Descriptive language (indulgent vs. health-focused)

- Length of descriptions

- Naming conventions (branded vs. descriptive)

- Call-out techniques (badges, icons, etc.)

3. Pricing Presentation

- Price positioning relative to item description

- Font size and style for prices

- Dollar signs vs. no dollar signs

- Decimal points vs. rounded whole numbers

4. Product Imagery

- Images vs. no images for specific categories

- Image size and quality

- Background color and contrast

- Food styling approaches

Implementing a Successful A/B Testing Program

Step 1: Establish Clear Hypotheses

Step 2: Design Controlled Experiments

- Location-based testing: Run Version A in some locations and Version B in others

  - Advantage: Clean separation between test groups

  - Requirement: Locations must have similar characteristics (volume, customer demographics, etc.)

- Time-based rotation: Alternate between versions on different days or weeks

  - Advantage: Controls for location-specific variables

  - Requirement: Consistent tracking of time periods to ensure comparable conditions

- Simultaneous split: Run both versions simultaneously on different screens within the same location

  - Advantage: Identical conditions for both test groups

  - Requirement: Careful analysis to ensure customer groups are comparable
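Location-based testing depends on the two groups of locations being comparable. One way to approach that, sketched here as an assumption rather than a prescribed method, is to pair locations with similar weekly volume and then randomly assign one of each pair to each version. The function name `assign_matched_pairs`, the store IDs, and the volumes are all hypothetical.

```python
import random


def assign_matched_pairs(weekly_volume, seed=0):
    """Pair locations with similar volume, then randomly split each pair
    between Version A and Version B."""
    rng = random.Random(seed)
    # Rank locations from lowest to highest weekly transaction volume.
    ranked = sorted(weekly_volume, key=weekly_volume.get)
    assignment = {}
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)  # random choice of which neighbor gets which version
        assignment[pair[0]] = "A"
        assignment[pair[1]] = "B"
    return assignment  # with an odd count, the highest-volume location is left out


# Hypothetical store IDs and weekly transaction counts.
volumes = {
    "store_1": 4200,
    "store_2": 3900,
    "store_3": 1500,
    "store_4": 1650,
    "store_5": 2800,
}
print(assign_matched_pairs(volumes))
```

Volume is only one matching factor. A real assignment would likely also consider customer demographics and daypart mix, as the requirement above notes.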

Step 3: Determine Appropriate Sample Size

- At least 2 weeks of data per test

- Minimum of 1,000 transactions per version

- Testing during comparable business periods (e.g., don't compare weekends to weekdays)
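The 1,000-transaction figure is a rule of thumb. The sample a test actually needs depends on the baseline rate and the size of the change you want to detect. The sketch below uses the standard normal-approximation formula for comparing two proportions; the function name `transactions_per_version` and the example rates are illustrative assumptions, not figures from the studies cited here.

```python
import math
from statistics import NormalDist


def transactions_per_version(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate transactions needed per version to detect a change in a
    proportion (e.g. add-on attachment rate) with a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_power = NormalDist().inv_cdf(power)          # critical value for power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Illustrative numbers only: 20% of orders include a dessert today,
# and the test should detect a rise to 24%.
needed = transactions_per_version(0.20, 0.24)
print(needed)
```

With these inputs the estimate comes out at roughly 1,700 transactions per version, which suggests the 1,000-transaction minimum may be too low when the expected lift is small.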

Step 4: Integrate Measurement Systems

- Item-specific sales counts

- Average check size

- Category attachment rates

- Daypart performance differences

- Conversion rates (views to purchase)
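As a rough illustration of how two of these metrics could be computed, the sketch below derives average check size and a category attachment rate per menu version. It assumes a hypothetical POS export where each order is a dict with `version`, `total`, and `categories` fields; a real POS system will use its own schema.

```python
from collections import defaultdict


def metrics_by_version(transactions, category):
    """Average check size and attachment rate for `category`, per menu version."""
    grouped = defaultdict(list)
    for order in transactions:
        grouped[order["version"]].append(order)

    summary = {}
    for version, orders in grouped.items():
        # Count orders that contain at least one item from the category.
        with_category = sum(1 for o in orders if category in o["categories"])
        summary[version] = {
            "orders": len(orders),
            "average_check": sum(o["total"] for o in orders) / len(orders),
            "attachment_rate": with_category / len(orders),
        }
    return summary


# Hypothetical data: one dict per order, tagged with the menu version shown.
orders = [
    {"version": "A", "total": 10.00, "categories": {"entree"}},
    {"version": "A", "total": 14.00, "categories": {"entree", "dessert"}},
    {"version": "B", "total": 12.00, "categories": {"entree", "dessert"}},
    {"version": "B", "total": 16.00, "categories": {"entree", "dessert", "drink"}},
]
summary = metrics_by_version(orders, "dessert")
print(summary)
```

Tagging every order with the version that was on screen at the time is the key design choice: without that field, none of the per-version comparisons are possible.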

Step 5: Analyze Results and Iterate

- Look for statistically significant differences (typically p < 0.05)

- Segment results by relevant factors (daypart, customer type, etc.)

- Consider both primary and secondary effects

- Document learnings for future tests
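To make the significance check concrete, here is a minimal sketch of a two-proportion z-test, one common way to compare conversion or attachment rates between two versions. It uses only the Python standard library; the function name and the counts are made up for illustration, and other tests (for example a t-test on average check size) may suit other metrics better.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conversions_a, total_a, conversions_b, total_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    rate_a = conversions_a / total_a
    rate_b = conversions_b / total_b
    # Pooled rate under the null hypothesis that both versions perform the same.
    pooled = (conversions_a + conversions_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Made-up counts: orders that included a featured add-on, out of all orders.
z, p = two_proportion_z_test(200, 1000, 250, 1000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 0.05: {p < 0.05}")
```

In this made-up example, a 20% versus 25% attachment rate over 1,000 orders each clears the p < 0.05 threshold. The same five-point gap over far fewer orders would not, which is why sample size and significance have to be considered together.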

Advanced A/B Testing Strategies

Multivariate Testing

- Different item images

- Various price points

- Multiple description styles
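A short sketch shows how quickly the number of versions grows when several elements are varied together. The variant labels below are hypothetical placeholders; the point is only the count.

```python
from itertools import product

# Hypothetical options for each element being varied.
images = ["photo_closeup", "photo_plated"]
prices = ["8.99", "9.49", "9.99"]
descriptions = ["indulgent", "health_focused"]

# A full factorial design tests every combination of the options.
variants = [
    {"image": image, "price": price, "description": description}
    for image, price, description in product(images, prices, descriptions)
]
print(len(variants))  # 2 * 3 * 2 = 12
```

If each version needs on the order of 1,000 transactions, twelve combinations need roughly 12,000, so multivariate tests are generally practical only with high traffic or many locations.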

Sequential Testing

- Test category organization (identify winner)

- Test imagery within the winning organization (identify winner)

- Test price presentation within the winning organization and imagery

Dynamic Testing

- Automatically feature highest-performing items more prominently

- Adjust pricing based on current inventory levels and historical purchase patterns

- Modify imagery based on weather conditions or time of day
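The text does not specify how a system would decide what to feature. One simple possibility, swapped in here purely as an illustration, is an epsilon-greedy rule: feature the best-converting item most of the time, but occasionally feature another item so its performance keeps being measured. The function name, the item names, and the counts are all hypothetical.

```python
import random


def choose_featured_item(stats, epsilon=0.1, rng=random):
    """Pick the item for the featured slot.

    stats maps item name -> (times_featured, purchases_while_featured).
    """
    if rng.random() < epsilon:
        return rng.choice(sorted(stats))  # explore: occasionally try any item

    def conversion(item):
        shown, bought = stats[item]
        return bought / shown if shown else 0.0

    return max(sorted(stats), key=conversion)  # exploit: current best performer


# Hypothetical counts: (times featured, purchases while featured).
stats = {"brownie": (400, 48), "cookie": (380, 57), "sundae": (0, 0)}
print(choose_featured_item(stats, epsilon=0.0))
```

With `epsilon=0.0` the rule always picks the current leader. A small positive value trades a little short-term revenue for continued learning about the other items.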

Common Pitfalls and How to Avoid Them

1. Testing Too Many Variables Simultaneously

2. Insufficient Sample Size

3. Selection Bias

4. Ignoring Operational Impact

Your A/B Testing Action Plan

- Start small: Begin with a single, high-impact test

- Build infrastructure: Ensure proper measurement systems are in place

- Create a test calendar: Schedule regular tests to promote continuous improvement

- Document everything: Maintain a database of tests, results, and learnings

- Scale gradually: Expand testing program as your team develops expertise
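For the "document everything" step, even a very simple structured record helps. The sketch below shows one possible shape for a test log entry, serialized as JSON so it can be appended to a file or loaded into a database later. The class name, field names, and the sample values are assumptions for illustration, not a required schema or real results.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class MenuTestRecord:
    name: str
    hypothesis: str
    primary_metric: str
    start_date: str
    end_date: str
    result: str = "pending"
    learnings: list = field(default_factory=list)


# Hypothetical entry; the dates span the two-week minimum.
record = MenuTestRecord(
    name="dessert-photo-size",
    hypothesis="Larger dessert photos raise the dessert attachment rate",
    primary_metric="dessert_attachment_rate",
    start_date="2024-03-04",
    end_date="2024-03-17",
)
record.result = "B won"  # placeholder outcome, not a real finding
record.learnings.append("Placeholder note recorded after analysis")

line = json.dumps(asdict(record))  # one JSON object per test
print(line)
```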

Sources:

- Susskind, A. M., Brymer, R. A., Kim, W. G., Lee, H. Y., & Way, S. A. (2019). Attitudes and perceptions about restaurant menu labeling and consumer behavior. Cornell Hospitality Quarterly, 60(3), 197-208.

- Kohavi, R., & Thomke, S. (2017). The surprising power of online experiments. Harvard Business Review, 95(5), 74-82.

- Taylor, J. J., & DiPietro, R. B. (2018). Experimental menu testing: A case study of applying data-driven menu engineering. International Journal of Hospitality Management, 72, 102-110.

- QSR Magazine (2019). Panera Bread's Digital Menu Transformation.

- Wansink, B., Painter, J., & Van Ittersum, K. (2015). Descriptive menu labels' effect on sales. Cornell Hospitality Quarterly, 56(1), 68-74.

- Mantica, R. (2017). Price presentation and consumer behavior on digital menu boards. Digital Signage Today.

- Hoover, D. (2018). Impact of food imagery on consumer perception and purchase behavior. Kansas State University Food Innovation Center.

- Nation's Restaurant News (2020). McDonald's Digital Menu Testing Framework.

- Restaurant Technology Network (2021). Best Practices Guide: Digital Menu Optimization.

- Fast Company (2021). Taco Bell's Digital Innovation Strategy.

- Wilson, A. (2016). Multivariate menu testing methodologies. Journal of Retailing, 92(2), 234-246.

- National Restaurant Association (2021). State of the Restaurant Industry Report.